LLM Trends 2026: Agentic Workflows and the Rise of AI Infrastructure

In Life Sciences—and across industries—organizations are moving beyond experimental AI and LLM pilots toward production-scale implementations that deliver measurable business value. The LLM ecosystem is evolving rapidly, with specialized, single-capability applications powered by Agentic AI emerging as modular components within complex enterprise systems. This transition is accelerating the adoption of autonomous, agentic workflows and context-aware copilots embedded directly into core business processes.

INNOVATION | TECHNOLOGY TREND | LLM AND AI

Jisun Yang with support from ChatGPT and NotebookLM

2/17/2026 · 4 min read

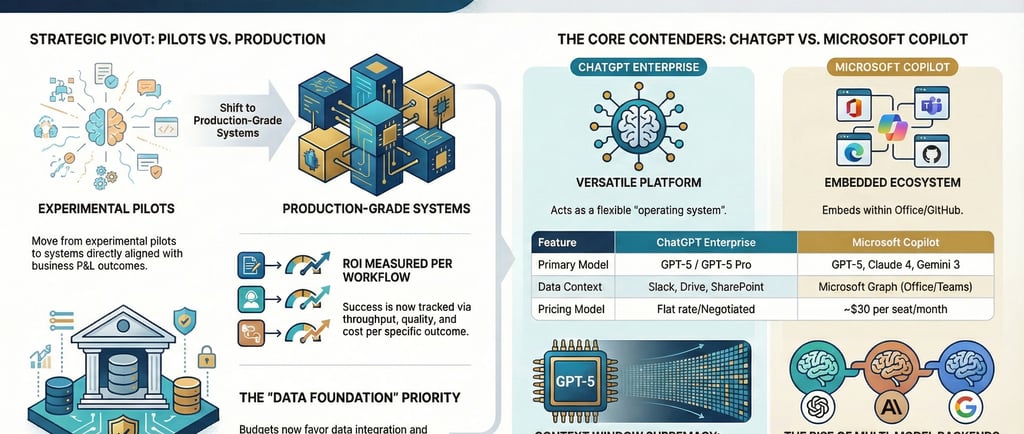

Enterprise AI is no longer defined by isolated pilots or experimental chatbots. The conversation is shifting from “What can LLMs do?” to “How do we operationalize them as infrastructure?”

In Life Sciences, Large Language Models (LLMs) are evolving from experimental interfaces into embedded, governed, production-grade components of enterprise architecture across R&D, clinical operations, manufacturing, regulatory, and pharmacovigilance. Competitive advantage will not come from deploying the most advanced model, but from building mature, compliant, and well-integrated AI ecosystems. Technical priorities are shifting toward hybrid deployment architectures and retrieval-augmented generation (RAG) patterns that protect sensitive clinical and patient data, ensure GxP alignment, and keep high operational costs manageable.

At the same time, a growing ecosystem of open-source and domain-specialized models enables life sciences organizations to balance performance with data residency requirements, regulatory constraints, and therapeutic-area specificity. However, innovation must be matched with disciplined oversight. Life sciences leaders must establish a comprehensive AI risk framework aligned with enterprise data strategy, AI strategy, and formal governance models. Such alignment addresses core challenges inherent in LLM capabilities—including accuracy variability, response inconsistency, and contextual reliability—while ensuring decisions remain scientifically and regulatorily defensible.

A well-architected FAIR (Findable, Accessible, Interoperable, Reusable) data foundation, combined with end-to-end traceability across models, prompts, training data, and outputs, is essential to close audit gaps in regulatory submissions and mitigate transparency risks in decision-support systems. Institutionalized validation protocols, continuous monitoring, model lifecycle management, and explainability standards transform AI from experimental tooling into compliant enterprise capability.

Ultimately, success in Life Sciences depends on moving beyond standalone chatbots toward secure, auditable, and deeply domain-aligned AI ecosystems that strengthen scientific rigor, support regulatory confidence, and accelerate compliant innovation.

LLMs are now services that operate behind dashboards, inside ERP systems, within clinical platforms, and across supply chain operations. They are:

Embedded within enterprise platforms

Integrated with workflow engines

Connected to proprietary data ecosystems

Exposed securely through APIs and mobile applications

2. The Rise of Agentic Workflows

A defining shift is the emergence of agentic AI: systems capable of autonomous, multi-step task execution within clearly defined governance boundaries. They serve as context-aware copilots embedded directly into operational processes. Unlike static prompt-response systems, agentic workflows can:

Plan and sequence complex processes

Retrieve and validate enterprise data

Execute actions across applications

Monitor outcomes and iteratively refine results

For example:

A clinical copilot reviews protocol deviations and recommends mitigation pathways.

A finance agent analyzes forecast variance and generates scenario models.

A manufacturing agent monitors quality signals and initiates corrective workflows.
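The plan, execute, and monitor cycle can be sketched as a small loop, using the manufacturing example above. This is a minimal illustration under stated assumptions, not a production agent: the `QualitySignal` type, the rule-based `plan_actions` function, the control limit, and the `ALLOWED_ACTIONS` governance list are all hypothetical. A real system would call an LLM planner and enterprise APIs where this sketch uses plain functions.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical governance boundary: the only actions the agent may take itself.
ALLOWED_ACTIONS = {"open_deviation_record", "notify_qa_lead"}


@dataclass
class QualitySignal:
    batch_id: str
    metric: str
    value: float
    limit: float  # assumed upper control limit for the metric


@dataclass
class AgentRun:
    executed: list = field(default_factory=list)  # actions the agent carried out
    blocked: list = field(default_factory=list)   # actions left for human review


def plan_actions(signal: QualitySignal) -> list[str]:
    """Plan step: a rule-based stand-in for an LLM planner."""
    if signal.value <= signal.limit:
        return []
    return ["open_deviation_record", "notify_qa_lead", "halt_production_line"]


def run_agent(signal: QualitySignal) -> AgentRun:
    """Execute planned actions, but only those inside the governance boundary."""
    run = AgentRun()
    for action in plan_actions(signal):
        if action in ALLOWED_ACTIONS:
            run.executed.append((action, signal.batch_id))
        else:
            run.blocked.append(action)  # monitor step: escalate instead of acting
    return run


if __name__ == "__main__":
    result = run_agent(QualitySignal("B-001", "impurity_pct", 3.4, 3.0))
    print(result.executed)
    print(result.blocked)
```

The design choice worth noting is that the allow-list sits outside the planner: whatever the planner proposes, actions outside the boundary are recorded for human review rather than executed.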

3. Retrieval-Augmented Generation (RAG) as Standard Architecture

Early LLM deployments exposed critical risks—hallucinated responses, reliance on outdated knowledge, limited source attribution, and insufficient traceability for regulated environments. Retrieval-Augmented Generation (RAG) has emerged as a foundational enterprise architecture to address these challenges. Rather than relying solely on static pretrained knowledge, RAG grounds model responses in authoritative, enterprise-controlled data sources.

Through RAG, organizations:

Connect LLMs to curated internal knowledge repositories and validated scientific content

Enable real-time, context-aware document retrieval

Enforce role-based access controls aligned with data governance policies

Preserve citation-level attribution and response-level audit trails

This architecture materially improves factual accuracy, strengthens governance and traceability, enhances explainability, and reduces the need for costly model retraining.
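A minimal sketch of the pattern, assuming a tiny in-memory corpus: retrieval is filtered by role before ranking, and the prompt carries document IDs so answers can cite their sources. The `Document` type, the sample documents, and the word-overlap scoring are hypothetical stand-ins. A real deployment would typically use an embedding-based vector search and an actual LLM call, neither of which is shown here.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


def tokenize(text: str) -> set[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()}


def retrieve(query: str, corpus: list[Document], role: str, k: int = 2) -> list[Document]:
    """Return up to k documents the role may read, ranked by word overlap."""
    q = tokenize(query)
    visible = [d for d in corpus if role in d.allowed_roles]  # access control first
    scored = [(len(q & tokenize(d.text)), d) for d in visible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [d for _, d in ranked[:k]]


def build_prompt(query: str, docs: list[Document]) -> str:
    """Ground the question in retrieved sources, tagged with citable IDs."""
    sources = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"{sources}\n\nQuestion: {query}"
    )


# Hypothetical sample corpus for illustration only.
CORPUS = [
    Document("SOP-12", "Deviation reports must be filed within 24 hours", frozenset({"qa", "clinical"})),
    Document("HR-03", "Salary bands for deviation investigators", frozenset({"hr"})),
    Document("SOP-40", "Stability samples are stored at 5 degrees", frozenset({"qa"})),
]

if __name__ == "__main__":
    question = "When must deviation reports be filed?"
    hits = retrieve(question, CORPUS, role="clinical")
    print(build_prompt(question, hits))
```

Filtering by role before scoring means a restricted document never reaches the prompt, so the model cannot leak content the requesting user is not allowed to see.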

In life sciences and other regulated industries, RAG must operate on a well-architected FAIR (Findable, Accessible, Interoperable, Reusable) data foundation. End-to-end traceability across models, prompts, source documents, and generated outputs is essential to close audit gaps in regulatory submissions and mitigate transparency risks in decision-support systems. Institutionalized validation protocols, continuous monitoring, model lifecycle management, and formal explainability standards elevate AI from experimental capability to compliant, production-grade enterprise infrastructure.

4. Domain-Specific, Industry-Aligned, and Governed Models

Operationalizing LLMs at scale introduces structural realities, including high inference costs, GPU dependencies, data residency requirements, and latency constraints. Enterprises are responding with hybrid deployment models that combine public cloud scalability, private cloud security, and on-premises control for sensitive data. In regulated industries like Life Sciences, AI success depends on domain-aligned models integrated with knowledge graphs, controlled vocabularies, ontology-driven prompting, and hybrid structured-unstructured reasoning. These approaches ensure context-aware, auditable, and scientifically defensible decision support.
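One way to picture hybrid deployment is as a routing policy keyed on data sensitivity. The sketch below is illustrative only: the `Sensitivity` tiers, the target names, and the residency rule are assumptions made for this example, not a prescribed policy.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = "public"      # e.g. published literature
    INTERNAL = "internal"  # e.g. internal procedures
    PATIENT = "patient"    # e.g. clinical or patient-level data


# Hypothetical mapping from sensitivity tier to deployment target.
ROUTES = {
    Sensitivity.PUBLIC: "public_cloud",
    Sensitivity.INTERNAL: "private_cloud",
    Sensitivity.PATIENT: "on_premises",
}


def choose_target(sensitivity: Sensitivity, residency_required: bool = False) -> str:
    """Pick a deployment target for a request.

    Assumed rule: when data residency applies, public cloud is never used.
    """
    target = ROUTES[sensitivity]
    if residency_required and target == "public_cloud":
        return "private_cloud"
    return target


if __name__ == "__main__":
    print(choose_target(Sensitivity.PATIENT))
    print(choose_target(Sensitivity.PUBLIC, residency_required=True))
```

Keeping the policy in a small, declarative table makes it easy to review and validate separately from the models it routes to.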

AI infrastructure is more than models or tools—it is a layered enterprise capability that unites deployment, governance, and data management. Key elements include:

FAIR and governed data foundation – Findable, Accessible, Interoperable, Reusable data ensures traceability, regulatory compliance, and high-quality model inputs

Hybrid deployment architecture – cloud, private, and on-premises integration for scalability and data protection

Robust governance frameworks – clear ownership, validation, continuous monitoring, ethical oversight, and transparency

Traceability and auditability – end-to-end documentation across models, prompts, outputs, and decision-support systems

Intelligent interfaces – embedded dashboards, workflow automation, and mobile-first delivery
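As a rough sketch of what end-to-end traceability might look like at the level of a single response, the record below links a model version, a prompt, the source documents used, and the generated output. The field names and the `trace_record` helper are hypothetical; hashing the prompt and output with SHA-256 is one possible way to make later tampering detectable, not a regulatory requirement stated in this article.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_hex(text: str) -> str:
    """Hex SHA-256 digest of a UTF-8 string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def trace_record(model_id, model_version, prompt, source_ids, output, timestamp=None):
    """Build one audit entry tying an output to its model, prompt, and sources."""
    when = timestamp or datetime.now(timezone.utc)
    return {
        "model_id": model_id,
        "model_version": model_version,
        "prompt_sha256": sha256_hex(prompt),
        "source_ids": sorted(source_ids),
        "output_sha256": sha256_hex(output),
        "timestamp_utc": when.isoformat(),
    }


if __name__ == "__main__":
    # All values below are made-up examples.
    record = trace_record(
        model_id="example-model",
        model_version="1.0",
        prompt="When must deviation reports be filed?",
        source_ids=["SOP-12"],
        output="Within 24 hours [SOP-12].",
    )
    print(json.dumps(record, indent=2))
```

Storing hashes alongside the full text (kept elsewhere under access control) lets an auditor confirm that a logged prompt and output are the ones actually used.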

By designing AI as integrated infrastructure grounded in FAIR principles and strong governance, organizations move beyond experimental chatbots toward secure, scalable, and compliant ecosystems that accelerate scientific innovation and operational efficiency.

The Bottom Line: AI as Enterprise Infrastructure

The defining trend in LLM adoption is not about using the biggest or most complex model—it is about maturing AI into reliable, governed, and scalable enterprise infrastructure. Success depends on designing AI as an integrated capability that delivers real business value, protects sensitive data, and aligns with regulatory requirements.

Key elements of enterprise AI infrastructure include:

Agentic workflows – AI embedded into operational processes to plan, execute, and monitor tasks

RAG-based architecture – grounding AI outputs in trusted, auditable enterprise data

Hybrid deployment – combining cloud scalability, private security, and on-premises control for sensitive information

FAIR and governed data foundations – ensuring data is Findable, Accessible, Interoperable, and Reusable

Robust governance – ownership, validation, monitoring, ethical oversight, and transparency

Industry alignment – models and workflows tailored to regulatory, domain, and operational requirements

Rather than focusing on using the largest AI model available, enterprises succeed by choosing the right model for the purpose, balancing performance, cost, compliance, and operational efficiency. Organizations that move beyond experimental chatbots and build secure, context-aware, auditable AI ecosystems gain durable competitive advantage.

In the next article, we will explore what “AI infrastructure” truly means—how it differs from a standalone platform, the layers it requires, and how enterprises can design it as a strategic, integrated capability rather than a collection of isolated tools.